Different screen resolutions – a simple and comprehensive guide

With a ton of different features and technical add-ons, picking a good monitor for gaming might seem like a lot of work. But the truth is that the science behind image reproduction and image quality isn’t that hard to understand. In our opinion, it is worth learning, because it is the only way to cut through the marketing buzz around displays and see them for what they really are. Understanding how a monitor works is the surest way to find the one that suits you best.

Whether you are an avid gamer, a professional designer or someone who wants a good monitor for watching media, one basic thing applies to all of these categories: a good screen resolution. So let’s break resolution down into its components and understand what it is.

Resolution in a nutshell

If we had to define resolution in a single sentence, we would say that the resolution of a monitor is the number of pixels it displays, usually written as width × height (for example, 1920×1080). A closely related figure is pixel density, measured in PPI (pixels per inch), which also depends on the physical size of the screen. With that said, the higher the resolution, the more pixels are displayed on the screen and, at a given screen size, the sharper and more detailed the images are.

Lowering the resolution magnifies the images on the screen, because fewer pixels are used to display the same image; raising it has the opposite effect. Monitors work best at their maximum resolution, also referred to as their ‘native resolution.’

What are Pixels?

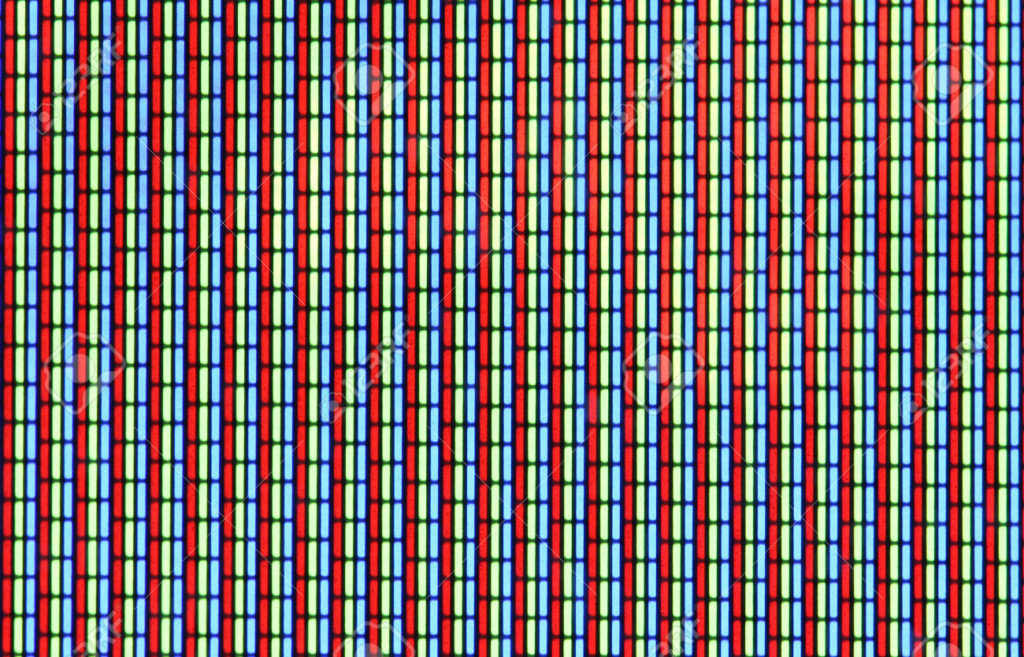

A pixel is a small, illuminated element with no standard size (how large it is depends on the size of the panel and the resolution used on it). An image is formed from many of these pixels. However, every display has a maximum number of pixels it can show (in other words, the maximum resolution of the monitor), because the pixels are physically built into the screen during manufacturing. The word pixel is derived from ‘picture element.’

Each pixel can be set to a particular color, which lets the screen compose multicolor images with ease and in great detail.

What is PPI?

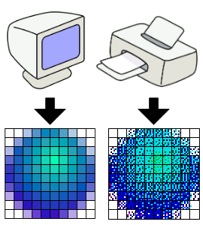

PPI (pixels per inch) is the unit used to measure the ‘pixel density’ of a monitor, or of any other electronic imaging device for that matter. PPCM (pixels per centimeter) is sometimes used to measure the same thing. PPI is often confused with DPI (dots per inch), but the two are slightly different.
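Pixel density follows directly from the resolution and the physical diagonal: by the Pythagorean theorem the diagonal spans √(width² + height²) pixels, and dividing that by the diagonal in inches gives PPI. Below is a minimal Python sketch, assuming square pixels and that the advertised diagonal is exactly the viewable area (both are simplifications); the function names are our own.

```python
import math

def ppi(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixels per inch, assuming square pixels and an exact viewable diagonal."""
    diagonal_px = math.hypot(width_px, height_px)  # pixels along the diagonal
    return diagonal_px / diagonal_in

def ppcm(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixels per centimeter: one inch is exactly 2.54 cm."""
    return ppi(width_px, height_px, diagonal_in) / 2.54

# The same 2560x1440 resolution is denser on a smaller panel.
for size in (24, 27, 32):
    print(f'{size}" 2560x1440: {ppi(2560, 1440, size):.1f} PPI')
```

This is why resolution alone does not tell you how sharp a screen looks: a 27-inch 1440p panel works out to roughly 109 PPI, while the same pixel count on a 32-inch panel drops to roughly 92 PPI.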

What is DPI?

‘Dots per inch’ is a term used in printing text and media. DPI is the physical dot density of a printed image, that is, of the ‘output’ from a device. That makes DPI different from PPI, which measures pixels per inch on the ‘input’ side, inside an imaging device.

What is Aspect Ratio?

Aspect ratio refers to the ratio of the width of an image (or a screen) to its height. It is written as two numbers separated by a colon (for example, x:y). The most commonly used aspect ratios for monitors and displays are 5:4, 4:3, 16:10 and 16:9. The standard aspect ratio for current displays is 16:9, shared by 720p, 1080p, 1440p and 2160p screens alike.
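Getting from a resolution to its aspect ratio is just a matter of dividing the width and height by their greatest common divisor. A minimal Python sketch (the sample resolutions are common examples we picked for illustration, not an exhaustive list):

```python
from math import gcd

def aspect_ratio(width_px: int, height_px: int) -> str:
    """Reduce width:height to lowest terms, e.g. 1920x1080 -> '16:9'."""
    d = gcd(width_px, height_px)
    return f"{width_px // d}:{height_px // d}"

for w, h in [(1280, 1024), (1024, 768), (1920, 1200), (1920, 1080), (1280, 720)]:
    print(f"{w}x{h} -> {aspect_ratio(w, h)}")
```

One quirk shows up right away: 1920×1200 reduces to 8:5, which the industry conventionally writes as 16:10 so it is easy to compare with 16:9. Likewise, an ultrawide 3440×1440 panel reduces to 43:18, so the familiar ‘21:9’ label is a rounded marketing figure.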

Now that we have established the basics, let’s look at the most common resolutions available in monitors today, how they work and which tasks each one suits best. But first, some friendly advice on decision-making.

What NOT to do

While we have established that image quality increases with screen resolution, resolution alone is not enough to seal the deal when it comes to making a purchase. The higher the resolution, the more ‘load’ there is on the machine, and with that comes the need for a good GPU and other components that can maintain that image quality during gaming.

As pixel counts climb with resolution, single-GPU computers may start to stumble. A low- to mid-range graphics card may technically support 1440p or higher, but using it for high-end gaming can be very frustrating. Additionally, LCDs have a native resolution at which they work best, and gaming or performing other tasks below that resolution can noticeably degrade image quality.
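One rough way to see that extra ‘load’ is to count how many pixels the GPU has to produce every second: width × height × frames per second. This is a deliberately simplified Python sketch; real GPU cost also depends on the game, its settings and the shading work done per pixel, none of which it models.

```python
def pixels_per_second(width_px: int, height_px: int, fps: int) -> int:
    """Pixels rendered per second at a given resolution and frame rate.

    A simplification: ignores per-pixel shading cost, overdraw, effects, etc.
    """
    return width_px * height_px * fps

baseline = pixels_per_second(1920, 1080, 60)  # 1080p at 60 fps as the reference
for name, w, h, fps in [
    ("1080p @ 60", 1920, 1080, 60),
    ("1440p @ 60", 2560, 1440, 60),
    ("1440p @ 144", 2560, 1440, 144),
    ("2160p @ 60", 3840, 2160, 60),
]:
    rate = pixels_per_second(w, h, fps)
    print(f"{name}: {rate / 1e6:.0f} Mpx/s ({rate / baseline:.1f}x 1080p @ 60)")
```

Even by this crude measure, 4K at 60 fps asks for four times the pixel output of 1080p at 60 fps, and 1440p at 144 fps asks for slightly more than that.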

So, to put it simply, scaling the resolution up or down is not a good way to find the right number of pixels for an experience that is both hassle-free and high in quality. In practice, each resolution currently has a specific best use. So before buying a monitor, look at the resolution alongside other features (such as refresh rate and adaptive sync support), and check that your graphics card can drive it, to make sure you are getting the right thing for you. Now, let’s look at the different monitor resolutions and their uses.

Different resolutions

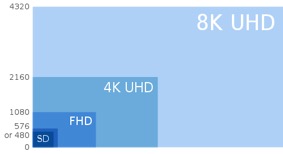

Here are the most popular resolutions used in monitors today. Each is written as the number of pixels across the width by the number of pixels down the height of the viewable screen.

- 1280×720 (HD, 720p)

- 1920×1080 (FHD, Full HD, 1080p)

- 2560×1440 (QHD, WQHD, Quad HD, 1440p)

- 3840×2160 (UHD, Ultra HD, 4K, 2160p)

- 7680×4320 (FUHD, Full Ultra HD, 8K, 4320p)
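Multiplying out the list above makes the jumps between tiers easier to see. A small Python sketch that prints each resolution’s total pixel count relative to 720p (the labels are just shorthand for the list entries):

```python
def total_pixels(width_px: int, height_px: int) -> int:
    """Total number of pixels on screen: width times height."""
    return width_px * height_px

# Resolutions from the list: (label, width, height)
RESOLUTIONS = [
    ("HD 720p", 1280, 720),
    ("FHD 1080p", 1920, 1080),
    ("QHD 1440p", 2560, 1440),
    ("UHD 2160p", 3840, 2160),
    ("8K 4320p", 7680, 4320),
]

hd_pixels = total_pixels(1280, 720)  # 720p as the reference point

for label, w, h in RESOLUTIONS:
    total = total_pixels(w, h)
    print(f"{label:<10} {w}x{h} = {total:>10,} pixels ({total / hd_pixels:g}x 720p)")
```

The pattern to notice is that doubling both the width and the height quadruples the pixel count: QHD has four times the pixels of 720p, UHD four times those of FHD, and 8K four times those of UHD.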

High Definition

Once the cutting edge of display technology, 720p displays now sit at the bottom of the list. Their native resolution is 1280×720, which means the High Definition Television (HDTV) resolution is 1280 pixels horizontally by 720 pixels vertically.

HD resolution is the standard for most TV shows, and the best application of HD displays is watching media. HD content is broadcast digitally all around the world, and Blu-ray discs (and, briefly, HD DVDs) have sold by the millions. These days, 720p displays are very affordable, and the resolution is also used in many phones (though plenty go higher). Being high definition has become a ‘minimum requirement’ in the video content world today.

Full High Definition

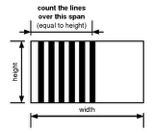

FHD, sometimes loosely called 2K (the cinema 2K standard is actually 2048×1080), is a resolution of 1920×1080. The ‘1080’ is the number of horizontal lines in the High Definition Television (HDTV) picture, stacked from top to bottom. These lines (also called television lines) are a measure of the display’s vertical resolution. FHD is considered to work best with 24-inch or smaller screens.

Lines of resolution

TVs have drawn their pictures as horizontal lines since the days of analog sets, which used 525 or 625 lines. On a digital display, each line is a row of pixels that are programmed to display and mix colors to create images, and more lines let a display resolve finer detail. However, you don’t need to dig deep into this to make a buying decision: the aspect ratio, native resolution, number of colors and your GPU cover the performance side of any display.

FHD typically has a 16:9 aspect ratio and a total of about 2.1 million pixels (1920 × 1080 = 2,073,600). It is certainly a delight for light gaming as well as for widescreen media viewing.

Quad High Definition/Wide Quad HD 1440p

Quad HD, a pixel count of 2560 horizontally by 1440 vertically, has become a standard for high-end monitors. With this resolution, we are dipping into high-end gaming (provided there is adaptive sync support and other complementing specs), and in smartphones it delivers an exceptionally sharp experience. To put it simply, a QHD screen has four times the pixels of a standard 720p HD screen: as many pixels as four 720p screens combined. Needless to say, sharpness increases significantly, which makes things better for viewing media on big screens and for high-end gaming setups. Ultrawide variants (often marketed as UWQHD) further stretch the width from 2560 to 3440 pixels for a roughly 21:9 aspect ratio. QHD is considered to work best with 27-inch or smaller monitors.

qHD and QHD

QHD (Quad High Definition) is sometimes confused with qHD (Quarter High Definition), and for good reason: the names are very similar. qHD has a display resolution of 960×540 pixels, one quarter of Full HD (1080p), whereas QHD has a far larger 2560×1440 resolution.
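The ‘quarter’ and ‘quad’ in these names both come from scaling the two dimensions together: halving the width and height of Full HD leaves a quarter of its pixels (qHD), while doubling the width and height of 720p gives four times the pixels (QHD). A quick Python check; the helper `scale` is our own illustration:

```python
def scale(width_px: int, height_px: int, factor: float) -> tuple[int, int]:
    """Scale both dimensions by one factor; the pixel count changes by factor**2."""
    return int(width_px * factor), int(height_px * factor)

fhd = (1920, 1080)
hd = (1280, 720)

q_hd = scale(*fhd, 0.5)  # qHD: half the width and half the height of Full HD
quad_hd = scale(*hd, 2)  # QHD: twice the width and twice the height of 720p

print("qHD:", q_hd, f"{q_hd[0] * q_hd[1]:,} pixels")
print("QHD:", quad_hd, f"{quad_hd[0] * quad_hd[1]:,} pixels")
```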

4K Resolution

UHD (Ultra High Definition), or 4K, displays are the latest, hottest thing in the world of gaming. But before we get to that, there is another confusion to clear up: although the two terms go hand in hand, 4K and UHD are not the same thing. The confusion comes from the marketing used by different brands; while some stick to the term ‘UHD’, most use 4K and UHD interchangeably. Here is the distinction.

With a resolution of 3840×2160 (four times that of FHD) at a 16:9 aspect ratio, UHD is best described as a ‘consumer display’ standard. 4K, on the other hand, is a 4096×2160 ‘production standard’ resolution used in digital cinemas and high-end cameras. UHD works best with 27-inch or bigger monitors.
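The two ‘4K’ formats differ in shape as well as in width. A short Python comparison using the standard library’s `fractions.Fraction`, which reduces a ratio to lowest terms automatically (the `describe` helper is our own):

```python
from fractions import Fraction

def describe(width_px: int, height_px: int) -> str:
    """Summarize a resolution: reduced aspect ratio, decimal ratio and pixel count."""
    ratio = Fraction(width_px, height_px)  # reduced to lowest terms on creation
    return (f"{width_px}x{height_px}: {ratio.numerator}:{ratio.denominator} "
            f"(~{float(ratio):.2f}:1), {width_px * height_px:,} pixels")

print("UHD (consumer):", describe(3840, 2160))
print("DCI 4K (cinema):", describe(4096, 2160))
```

Consumer UHD comes out at exactly 16:9 (about 1.78:1), while cinema 4K reduces to 256:135 (about 1.90:1), a slightly wider frame with around 550,000 more pixels.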

Gaming at ‘4K’

Needless to say, gaming at ‘4K’ is one hell of an experience. But the picture sharpness this resolution provides takes its toll on your graphics card. It is said that 4K gaming started as a ‘hobby for the one percent’ of gamers who could afford it, but it has become more accessible over time. Still, while gaming at Ultra High Definition sounds like a dream come true, it is a lot more demanding than you might think.

Pushing this many pixels demands a lot of the whole gaming setup, the most basic requirements being a good refresh rate and frame synchronization support (AMD’s FreeSync or Nvidia’s G-Sync). If these are not covered well, you may run into issues like screen tearing and stuttering. In the early days of 4K gaming, you had to spend well over $1400 on your graphics card, power supply and monitor, so compared to that, things have gotten a bit easier.
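The refresh rate also tells you how little time the graphics card has per frame if it is to keep pace with the display: 1000 ms divided by the refresh rate in Hz. A rough Python sketch; treating one refresh interval as a hard per-frame deadline is a simplification, since adaptive sync such as FreeSync or G-Sync exists precisely to let the monitor wait for a late frame instead of tearing.

```python
def frame_budget_ms(refresh_hz: float) -> float:
    """Milliseconds between refreshes, i.e. the per-frame time a GPU has to keep pace."""
    return 1000.0 / refresh_hz

for hz in (60, 144):
    print(f"{hz} Hz -> {frame_budget_ms(hz):.2f} ms per frame")
```

At 60Hz the GPU has about 16.7 ms per frame; at 144Hz that shrinks to about 6.9 ms, which is why high refresh rates and high resolutions together are so demanding.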

Displays capable of UHD resolution are now available at a range of prices, but for such a performance-oriented machine, you will want to go with one of the most dependable products on the market.

Take the Asus ROG Swift PG27AQ, for example, a 27-inch 4K monitor priced at around $900. While this display is on the expensive side, it covers the major gaming bases well: it has a 60Hz refresh rate, a 4ms response time and Nvidia G-Sync support, so it can keep frame delivery smooth while pushing all those pixels, without problems in ‘quality consistency.’

5K

Between 4K and 8K sits 5K, at 5120×2880, and monitors with this resolution are usually tagged as ‘UHD 5K displays.’ Dell’s ‘Ultra HD 5K UP2715K’ is one such example, and the Apple iMac MK482HN/A is another. These are exceptionally good for graphics-related work, and if you have some money left over after budgeting for 4K, you can go for 5K instead.

Full Ultra High Definition/8K

Now let’s talk about FUHD, a resolution of 7680×4320, which is the highest resolution in the field of digital television and cinematography. It has four times the pixel count of 4K UHD (twice the width and twice the height), and its roughly 8,000-pixel width gives it the name ‘8K.’

8K-capable cameras came into existence in the last few years, with NHK and Hitachi demonstrating their 8K cameras at the 2013 NAB Show. NHK (which had been working on 4320p development since 1995) was one of the few companies able to manufacture a broadcast camera with an 8K image sensor in 2015. Other companies, like Sony and Red Digital Cinema Camera Company, are working on bringing 8K to market in the coming years. Until then, 8K will mainly be used by filmmakers, who shoot in 8K to get better-quality 4K output footage. So, in a nutshell, if you are interested in filmmaking or post-production, you might want to look more into 8K and how long it will take for 8K cameras to hit the market. For a gamer, though, 8K gaming is a dream at this point.

Beyond this, there is the ‘8K fulldome’ resolution (8192×8192), used for modern fulldome projection in planetariums, and the 16K (15360×8640) resolution that we hope to experience in the next 5-15 years.

Samsung announced the world’s first 98-inch 8K (7680×4320) UHD TV in 2014, featuring ‘color prime’ technology. Color prime uses a wider spectrum of colors, making it possible to reproduce deeper and more dynamic colors, like ocean blue and emerald green. Sharp Corporation also put a limited number of 7680×4320 monitors on sale last year, and many companies are working on 8K as we speak. But at this point, these monitors aren’t affordable for the everyday buyer in any sense of the word. It is safe to say that 8K is the future of TV, though.

What’s next

Nvidia and AMD are both currently working on 8K. Richard Huddy, AMD’s chief gaming scientist, has said that they might eventually support a display resolution of around 8K horizontally and around 6K vertically, with about 48 million pixels in the user’s field of view. And while that would certainly be a ‘life-like’ gaming experience, as far as gaming and content-viewing monitors go, 4K is where we are right now (with a few expensive 5K exceptions).

I hope this article has given you a deeper insight into resolutions and the factors that shape a gaming or viewing experience. Understanding the technical side and clearing up common doubts is good practice before making a buying decision. In today’s world, where branding and marketing can be shiny and deceiving, it is ‘every gamer for himself.’
