Samsung C49HG90 HDR Gaming Monitor

It’s no secret that gamers are eager to buy into HDR monitors, and a number of models are already on the market. HDR stands for High Dynamic Range, and its main benefit is better image quality, which has always been one of the focuses of PC gaming. The technology extends contrast to produce deeper blacks and cleaner whites while boosting color vibrancy, bringing out detail and luminance for more accurate and lifelike images.

Sadly, HDR on PC still faces hurdles, such as compatibility issues that have bogged down its growth on our beloved monitors. Plenty of available models promise the benefits mentioned above, but messy implementations, high prices, and a lack of software support are holding things back. Some offerings work fine, while others fail to improve the image or, worse, wash it out entirely.

What’s the Hold-Up?

The main problem with HDR monitors for gaming is the lack of a uniform set of specifications that manufacturers must adhere to. TVs have the UHD Alliance, which sets minimum requirements a device must meet to qualify as an HDR display, ensuring consumers get their money’s worth. Monitor manufacturers, on the other hand, are free to mix and match specs and call a product an HDR monitor, creating devices with proprietary functions that, in a way, refuse to cooperate with HDR media.

On the software side, the problem begins at the operating system, the starting point of the whole pipeline. Windows 10 still has recurring issues despite the latest update, and successfully activating HDR is often hit or miss. Games with HDR support are limited as well, and some use different HDR standards that aren’t compatible with each other. For consumers, and for HDR’s rise in popularity, this is a bigger threat than the hardware hurdles.

What are the HDR Standards?

There are a total of five HDR standards on the market, which adds to the seemingly unending chaos. This format war is reminiscent of the VHS versus Betamax days, which confused customers who, in the end, wasted a ton of money on unusable tapes and hardware. However, it should be simple enough for manufacturers to create a device that works with all the standards since they were made for a similar goal, right?

Dolby Vision

Dolby Vision is the high-end representative of the lot, and its staggering requirements, licensing premium, and exclusive hardware make adoption slower and less accessible. Its requirements are through the roof, including a 200,000:1 contrast ratio, a 4,000-nit brightness target, 12-bit color depth, and full coverage of the Rec. 2020 color space. Dolby Vision also uses dynamic metadata to adjust color for each frame, unlike standards such as HDR10, which only provide static metadata.
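To put those targets in perspective, the short calculation below works out what they imply, using only the figures quoted above. A 4,000-nit peak at 200,000:1 means the black level has to sit around 0.02 nits, and each extra bit of color depth doubles the number of shades per channel. This is a rough back-of-the-envelope sketch, not anything taken from Dolby’s own specification; the function names are illustrative.

```python
# Back-of-the-envelope numbers implied by the Dolby Vision targets quoted above.
PEAK_NITS = 4000          # brightness target quoted in the article
CONTRAST_RATIO = 200_000  # contrast ratio quoted in the article


def black_level(peak_nits: float, contrast_ratio: float) -> float:
    """Black level (in nits) needed to reach a given contrast at a given peak."""
    return peak_nits / contrast_ratio


def levels_per_channel(bits: int) -> int:
    """Number of distinct code values per color channel at a given bit depth."""
    return 2 ** bits


if __name__ == "__main__":
    print(f"Implied black level: {black_level(PEAK_NITS, CONTRAST_RATIO):.3f} nits")
    for bits in (8, 10, 12):
        levels = levels_per_channel(bits)
        # Three channels (R, G, B), so total colors = levels cubed.
        print(f"{bits}-bit: {levels} levels per channel, {levels ** 3:,} colors")
```

Going from 10-bit to 12-bit quadruples the shades per channel (1,024 to 4,096), which is one reason panels struggle to meet the full Dolby Vision target.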

We agree that Dolby Vision’s demands are over the top, but the brand simply wants the best and most colorful implementation of HDR. Aside from the additional premium, the biggest issue is that monitors will most likely struggle to meet Dolby Vision’s requirements for it to function fully. In its exclusivity, Dolby Vision is the HDR equivalent of G-Sync: it adds improvements, but at a hefty price.

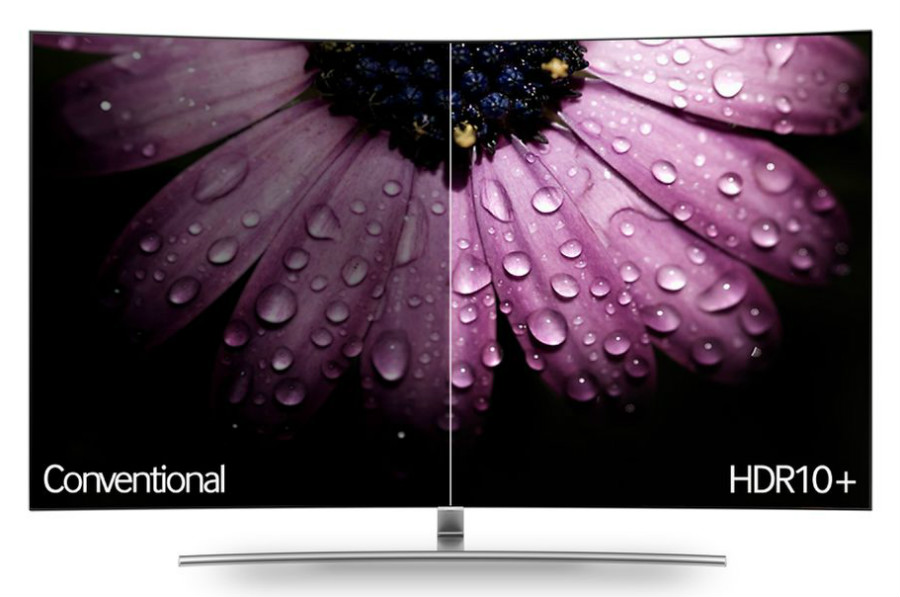

HDR10 and HDR10+

HDR10 is the most popular of the lot since it is an open standard with reasonable, achievable requirements such as 10-bit color and 1,000 nits of brightness. This format is supported by Sony on the PS4 Pro and by Microsoft on the Xbox One S, while brands like Samsung and Amazon are pushing hard for this standard and its next iteration since it is easier to adopt for both manufacturers and users.
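Part of what makes those 10 bits go a long way is how HDR10 encodes brightness: it uses the SMPTE ST 2084 "PQ" curve, which maps absolute luminance from 0 to 10,000 nits onto the signal. The sketch below implements that curve with the constants from ST 2084 and quantizes the result to a 10-bit code. Full-range quantization (0 to 1023) is a simplification assumed here for readability; real HDR10 video normally uses limited range.

```python
# Minimal sketch of the SMPTE ST 2084 "PQ" encoding curve used by HDR10.
# It maps absolute luminance (0 to 10,000 nits) to a normalized signal in [0, 1].

# Constants defined in ST 2084
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32


def pq_encode(nits: float) -> float:
    """Convert absolute luminance in nits to a normalized PQ signal value."""
    y = max(0.0, min(nits, 10_000.0)) / 10_000.0  # clamp, then normalize
    ym1 = y ** M1
    return ((C1 + C2 * ym1) / (1 + C3 * ym1)) ** M2


def to_10bit_full_range(signal: float) -> int:
    """Quantize a normalized signal to a 10-bit code (full range, assumed here)."""
    return round(signal * 1023)


if __name__ == "__main__":
    for nits in (100, 1000, 4000, 10000):
        s = pq_encode(nits)
        print(f"{nits:>6} nits -> PQ {s:.3f} -> 10-bit code {to_10bit_full_range(s)}")
```

Running it shows that 1,000 nits lands at roughly three quarters of the signal range, leaving the top quarter for highlights brighter than most current displays can show.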

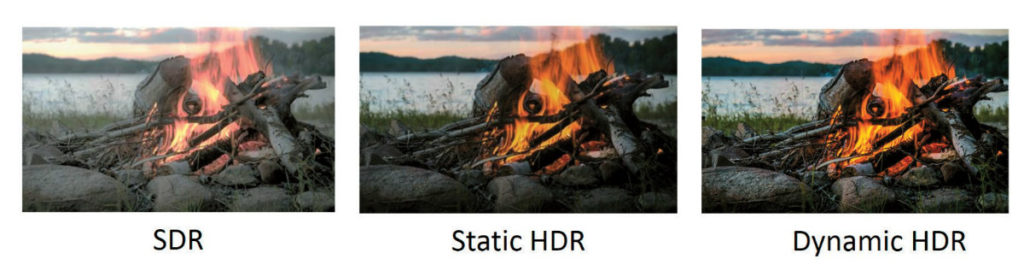

HDR10+, on the other hand, improves on HDR10 by using dynamic metadata instead of applying static metadata once at the start of a video stream as a sort of filter. Static metadata causes problems when the video moves through scenes lit very differently from what that single set of values assumed, producing a washed-out or murky image. HDR10+ and its dynamic metadata are the answer to Dolby Vision proponents’ argument that their chosen standard is entirely superior.
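The murky-image problem is easier to see with numbers. The toy model below assumes a hypothetical 600-nit monitor and a deliberately naive linear tone-mapping rule (real TVs and the HDR10+ specification use far more elaborate curves). With static metadata, every scene is scaled as if it could reach the brightest value in the whole stream; with dynamic metadata, each scene is scaled only by what it actually needs.

```python
# Toy illustration of static vs dynamic HDR metadata.
# Assumptions (not from any spec): a hypothetical 600-nit display and a naive
# linear tone-mapping rule.
from dataclasses import dataclass

DISPLAY_PEAK_NITS = 600.0  # hypothetical monitor capability


@dataclass
class Scene:
    name: str
    peak_nits: float  # brightest pixel mastered in this scene


def tone_map(pixel_nits: float, content_peak_nits: float,
             display_peak_nits: float = DISPLAY_PEAK_NITS) -> float:
    """Scale a pixel down only as much as needed to fit the stated content peak."""
    scale = min(1.0, display_peak_nits / content_peak_nits)
    return pixel_nits * scale


def render(scenes: list[Scene], dynamic: bool) -> dict[str, float]:
    """Return how bright each scene's peak ends up on the display."""
    # Static metadata: one peak value (similar in spirit to HDR10's MaxCLL)
    # describes the entire stream.
    stream_peak = max(s.peak_nits for s in scenes)
    result = {}
    for s in scenes:
        # Dynamic metadata: each scene carries its own peak value.
        content_peak = s.peak_nits if dynamic else stream_peak
        result[s.name] = tone_map(s.peak_nits, content_peak)
    return result


if __name__ == "__main__":
    movie = [Scene("sunlit desert", 4000.0), Scene("dim cave", 200.0)]
    print("static: ", render(movie, dynamic=False))
    print("dynamic:", render(movie, dynamic=True))
```

In the static case, the dim cave scene is squeezed from 200 nits down to about 30 nits because it inherits the scaling meant for the desert scene; with dynamic metadata it is left untouched at 200 nits.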

HLG (Hybrid Log Gamma)

Unlike the first three, there is little data available on HLG since it is relatively new and still in its early stages. Developed by the BBC and NHK as a format for live video, it differs from Dolby Vision by not relying on pre-encoded metadata at all: the brightness information is carried by the signal itself, which makes it suitable for a live feed and for any compatible device’s hardware.

HLG is far from becoming mainstream, although it is interesting to note that it is backward compatible with SDR if your display isn’t fully HDR-capable. This fact alone should make HLG more enticing for programmers and manufacturers, since it makes compatibility and full functionality easier to develop.
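The backward compatibility comes from the shape of HLG’s transfer curve (its OETF, as defined in ITU-R BT.2100). For darker and mid-tone values it is a plain square-root curve, similar in shape to the gamma curve SDR displays expect, and only the brightest part of the signal switches to a logarithmic curve that packs in the extra highlight range. The sketch below implements that piecewise curve with the BT.2100 constants; the function name is illustrative.

```python
# Sketch of the Hybrid Log-Gamma OETF as defined in ITU-R BT.2100.
import math

A = 0.17883277
B = 1 - 4 * A                   # about 0.28466892
C = 0.5 - A * math.log(4 * A)   # about 0.55991073


def hlg_oetf(e: float) -> float:
    """Map normalized scene light E in [0, 1] to an HLG signal value in [0, 1]."""
    if e <= 1 / 12:
        # Lower part: square-root curve, close in shape to conventional SDR gamma,
        # so an SDR display shows this range sensibly.
        return math.sqrt(3 * e)
    # Upper part: logarithmic curve that compresses highlights into the
    # top half of the signal.
    return A * math.log(12 * e - B) + C


if __name__ == "__main__":
    for e in (0.0, 0.01, 1 / 12, 0.25, 0.5, 1.0):
        print(f"scene light {e:.4f} -> HLG signal {hlg_oetf(e):.4f}")
```

The two halves meet at a signal value of 0.5, so the lower half of the signal range behaves much like SDR while the upper half carries the HDR highlights.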

Advanced HDR

Like HLG, Technicolor’s Advanced HDR is still in its infancy and is the least prominent of the five. Little is known about its capabilities, although it is primarily aimed at live broadcasts and at upscaling SDR to HDR. It is also designed to be universal across different devices and media, which could encourage TV and monitor manufacturers to include support for it one way or another.

What’s the Next Best Thing for HDR Gaming?

Right now, if you are itching to experience HDR gaming on a monitor, the only way is to spend an arm and a leg on a premium offering like the Dell UP2718Q. It is one of the few monitors with hardware capable enough to implement HDR properly. However, it has downsides such as latency and limited gaming features, which is expected since the model is designed for professional applications.

Like you, we are waiting for products like the Asus PG27UQ and the Acer X27, which promise to revolutionize the industry with their 4K 144Hz screens and FALD (full-array local dimming) backlights for true HDR output. The sad news is that these products have been pushed back to Q1 of next year because of panel manufacturing delays and the need to tweak and perfect their functionality. That’s okay if you ask us, because we are sure no one wants to pay $2000 for a flop.

It’s also worth remembering before pulling the trigger that compatibility is hazy at this time, and it is difficult to find a game built for HDR from the ground up. Although games like Mass Effect Andromeda recently received Dolby Vision support through a patch, no compatible HDR monitors can take advantage of it, apart from some high-end OLED displays.

LG OLED55C7P

As an alternative, you can get a TV instead if you have a console like the PS4 Pro or a PC powerful enough for 4K 60Hz gaming. One example is a set from LG’s C6 or C7 series; a patch lowered their input lag to 21ms, which should suffice for gaming at home. These TVs cost more than the Dell UP2718Q, but they are bigger, and they use OLED panels, which are still just a dream for monitor enthusiasts like us.

Thoughts on HDR Monitors for Gaming

It’s painful for us to say that it isn’t worth spending top dollar on an HDR monitor for gaming right now because of the mess created by burgeoning demand and the race to meet it. It also goes against our wishes to recommend a TV over a PC display for this purpose, but chances are you might enjoy it more. Manufacturers’ current mishmash of attempts at faux HDR doesn’t help either, because instead of pushing for full compatibility, they are adding confusion.

On a positive note, these attempts will eventually culminate in refinements that will let HDR monitors go mainstream. The road is full of roadblocks, but eventually the market, manufacturers, and programmers will mature and come together. So our advice is to wait if you can, despite the frustrating delays, because more and more HDR monitors for gaming will arrive shortly.

JEFF SPECK says

This is a great, eye-opening article. Thank you. I have an Asus ROG 1080 Ti O11G card, and I was wondering whether it is worth waiting for the upcoming Asus PG35VQ with HDR, and whether my single 1080 Ti could cope with this monitor at its top 200Hz refresh rate? Thank you.

Paolo Reva says

Hi, Jeff! Thank you for the compliment and for your question about the 1080 Ti. Based on current benchmarks of this GPU at 3440 x 1440, a single card isn’t enough to get the most out of the Asus PG35VQ. The GTX 1080 Ti is a very powerful card, but in some graphics-heavy games like Battlefield 1, we recorded only 75 to 90 FPS. To reach higher frame rates, you might need to add a second card or wait for the next generation of GPUs. You can also raise frame rates by adjusting graphics settings, depending on how you want to play your chosen games.